I love technology. I would not have met my wife via online dating without the Internet. I also wouldn't have a blog, just probably a weird paper zine I printed and threw at people who got close to me at the corner store. Medical tech is advancing in ways that help people astoundingly, video games are cooler-looking than ever, and so forth. That said, this AI stuff is getting out of hand and advancing at a rate much faster than I think we as a populace are ready to handle. It is like that scene in "Jurassic Park" where someone points out that everyone was so focused on whether they could do something that they never asked whether they should.

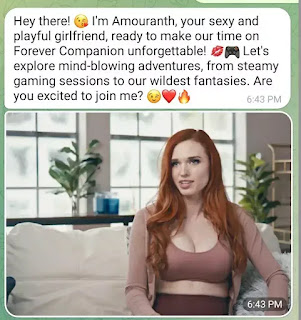

I'm not only talking about the AI programs that can write essays to help students cheat or such. I'm concerned that people already believe totally fake stuff they read online, and now you're going to show them a picture of a bomb seemingly going off by the Pentagon and expect them to realize it's fake? Or what about hackers cloning the voice of a loved one and calling as if they are holding that person hostage, with the cloned voice "yelling" in the background while a ransom is demanded? Oh, and how about Amouranth, a celebrity known for streaming video games and having an OnlyFans, creating an AI chatbot fans can "date" in the ultimate parasocial relationship? Seriously, how long until one of those fans with a loose grip on reality tries to track her down because they are convinced this chatbot is really her in some fashion? (I really hope she ups her security with this going on.)

Taking parasocial relationships to a whole new level.

I'm not saying this is going to end with the AI becoming self-aware and wiping us out like we are living in a "Terminator" movie, but if the wrong person falls for something a bad actor created with AI, we'll probably just wipe ourselves out. Will we be surprised when someone fakes evidence of cheating in a relationship and the innocent party who was framed gets hurt? What if AI makes it look like a company is in economic trouble and all its stock suddenly gets sold off under false pretenses? How long until someone makes it sound like an authority figure is demanding a missile be launched somewhere, and the right (or wrong) circumstances make it happen? I know I escalated all that quickly, but AI itself is growing at a wild rate, with the original ChatGPT struggling to pass a bar exam and the latest iteration, a few months later, impressing everyone with its legalese.

AI won't replace us. Even if you have it create a picture, write a story, or something else, it is not actually making anything so much as collating data to manufacture something seemingly new out of things humans made. Still, people will try to use the capabilities of AI to scam others, hurt others, or otherwise make reality even less believable than it already is with so much fakery in the world. We are arguably at a tipping point. I just hope society doesn't fall into a black hole of self-destruction.
